Server and Cluster Requirements

Before installing and configuring Graph Lakehouse, it is important to determine the appropriate size and scale of the environment, whether you are installing on "bare metal" servers, on virtual machines, or in a cloud service. This topic details the minimum requirements and recommendations to follow for setting up Graph Lakehouse host servers and cluster environments.

- Hardware Requirements

- Software Requirements

- Firewall Requirements

- Virtual Environments and Cluster Configuration

Hardware Requirements

The hardware requirements that Altair lists below are guidelines for an above-average production system. Large production data sets running interactive queries may require significantly more powerful hardware and more RAM. Provision production server hardware accordingly to avoid performance issues.

| Component | Minimum | Recommended | Guidelines |
|---|---|---|---|
| Available RAM | 16 GB (for small-scale testing only) | 200+ GB | Graph Lakehouse needs enough RAM to store data and intermediate query results and to run the server processes. Altair recommends that you allocate 3 to 4 times as much RAM as the planned data size. Do not overcommit RAM on a VM or on the hypervisor/container host. Avoid memory paging to disk (swapping) to achieve the highest possible level of performance. For more information about determining the server and cluster size that is ideal for hosting Graph Lakehouse, see Sizing Guidelines for In-Memory Storage. |
| Disk Space and Type | 40 GB HDD | 200+ GB SSD | Graph Lakehouse requires 30 GB for internal requirements. The amount of additional disk space required for load file staging and graph data persistence depends on the size of the data to be loaded. For persistence, Altair recommends that you have twice as much disk space on the local Graph Lakehouse file system as RAM on the server. |
| CPU | 2 cores | 32 or 64 cores | Once you provision sufficient RAM and a high-performing I/O subsystem, performance depends on raw CPU capabilities. Always use multi-core CPUs. A greater number of cores can make a dramatic difference in the performance of interactive queries. Intel x86-64 processors are recommended, but Graph Lakehouse is also supported on EPYC and later-generation AMD processors. Graph Lakehouse does not run on Opteron AMD processors or Mac ARM-based processors. |
| Networking | 10 GbE | 20+ GbE | Not applicable for single-server installations. Since Graph Lakehouse is a high-performance, massively parallel processing (MPP) graph OLAP engine, inter-cluster communications bandwidth dramatically affects performance. Graph Lakehouse clusters require optimal network bandwidth. All servers in a cluster must be in the same network. Make sure that all instances are in the same VLAN, security group, or placement group. In a switched network, make sure that all NICs link to the same top-of-rack or full-crossbar modular switch. If possible, enable SR-IOV and other hardware acceleration methods and dedicated layer 2 networking that guarantees bandwidth. |
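
The RAM and disk guidelines in the table can be turned into a rough estimate. The following is a minimal sketch, not an official sizing tool: the function name `estimate_sizing` and the class `SizingEstimate` are hypothetical, and it assumes that the 30 GB internal requirement is added on top of the persistence space. It does not account for load file staging space.

```python
from dataclasses import dataclass

# Figures taken from the hardware requirements table.
INTERNAL_DISK_GB = 30      # disk needed for internal requirements
RAM_MULTIPLIERS = (3, 4)   # RAM should be 3 to 4 times the planned data size
DISK_TO_RAM_RATIO = 2      # persistence: twice as much disk as RAM


@dataclass(frozen=True)
class SizingEstimate:
    ram_low_gb: float
    ram_high_gb: float
    disk_low_gb: float
    disk_high_gb: float


def estimate_sizing(data_size_gb: float) -> SizingEstimate:
    """Estimate RAM and disk ranges (in GB) for a planned data size."""
    if data_size_gb <= 0:
        raise ValueError("data_size_gb must be positive")
    ram_low, ram_high = (m * data_size_gb for m in RAM_MULTIPLIERS)
    # Assumption: internal space is added to the persistence space.
    # Load file staging space is NOT included in this estimate.
    return SizingEstimate(
        ram_low_gb=ram_low,
        ram_high_gb=ram_high,
        disk_low_gb=INTERNAL_DISK_GB + DISK_TO_RAM_RATIO * ram_low,
        disk_high_gb=INTERNAL_DISK_GB + DISK_TO_RAM_RATIO * ram_high,
    )
```

Under these assumptions, a planned data size of 50 GB yields 150 to 200 GB of RAM and 330 to 430 GB of disk space.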

Graph Lakehouse requires that all elements of the infrastructure provide the same quality of service (QoS). Do not run Graph Lakehouse on the same server as any other software, except in single-server mode and with the expectation of lower performance. Providing the same QoS is especially important when using Graph Lakehouse in a clustered configuration. If any of the servers in the cluster perform additional processing, the cluster becomes unbalanced and may perform poorly. A single poorly performing server degrades the other servers to the same performance level. All nodes require the same hardware specification and configuration. Also, use static IP addresses or make sure that DHCP leases are persistent.

To ensure the maximum and most reliable QoS for CPU, memory, and network bandwidth, do not co-locate other virtual machines or containers (such as Docker containers) on the same hypervisor or container host. For hypervisor-managed VMs, configure the hypervisor to reserve the available memory for the Graph Lakehouse server. For clusters, make sure there is enough physical RAM to support all of the Graph Lakehouse servers, and reserve the memory via the hypervisor.

In addition, running memory compacting services such as Kernel Same-page Merging (KSM) impacts CPU QoS significantly and does not benefit Graph Lakehouse. Live migrations also impact the performance of VMs while they get migrated. While live migration can provide value for planned host maintenance, Graph Lakehouse performance may be impacted if live migrations occur frequently. For more information about Kernel Same-page Merging, see https://en.wikipedia.org/wiki/Kernel_same-page_merging.

Advanced configurations may benefit from CPU pinning on the hypervisor host and disabling CPU hyper-threading. For more information about CPU pinning, see https://en.wikipedia.org/wiki/Processor_affinity. For information about hyper-threading, see https://en.wikipedia.org/wiki/Hyper-threading.
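
To see whether Kernel Same-page Merging is active on a Linux hypervisor host, you can read the kernel's KSM control file. The following is a minimal sketch: the function name `ksm_is_active` is hypothetical, and it assumes the standard sysfs location `/sys/kernel/mm/ksm/run`, where a value of `1` means that KSM is running.

```python
from pathlib import Path

# Standard sysfs control file for KSM on Linux.
KSM_RUN_FILE = Path("/sys/kernel/mm/ksm/run")


def ksm_is_active(run_file: Path = KSM_RUN_FILE) -> bool:
    """Return True if KSM is currently merging pages.

    The file contains 0 (stopped), 1 (running), or
    2 (stopped, with all merged pages unmerged).
    """
    try:
        return run_file.read_text().strip() == "1"
    except OSError:
        # The file is absent when the kernel was built without KSM.
        return False
```

Run the check on the hypervisor or container host rather than inside the guest, since that is where page merging is performed.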

Altair can provide benchmarks to establish relative cluster performance metrics and validate the environment.

Software Requirements

The table below lists the software requirements for Graph Lakehouse host servers.

For container deployments, the required software and tuning are included in the Graph Lakehouse images. For RHEL/Rocky deployments, Pre-Installation Requirements provides details about configuring the required software on single-server and cluster deployments.

| Component | Requirement | Description |
|---|---|---|
| Operating System | RHEL/Rocky Linux 9.3+ | Graph Lakehouse is not supported on Enterprise Linux 7 or 8. |
| glibc-devel library | Installed on all host servers | For compiling queries, Graph Lakehouse requires the latest version of the glibc-devel library for your operating system. |
| GNU binutils | Installed on all host servers | To compile and link programs, Graph Lakehouse requires the latest version of the binutils package for your operating system. |
| OpenJDK or GraalVM | Version 21, installed on all host servers | Graph Lakehouse uses a Java client interface to access data sources. A Java 21 environment is required for using the Java client. Graph Lakehouse supports OpenJDK 21 and GraalVM 21. |
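
Part of the table above can be verified with a short script. The following is a minimal sketch under stated assumptions: the function names `java_major_version` and `check_host` are hypothetical, the binutils check only looks for the `ld` and `as` programs on the `PATH`, and the Java check parses the output of `java -version`. It does not check the operating system release or the glibc-devel package.

```python
import re
import shutil
import subprocess

REQUIRED_JAVA_MAJOR = 21  # from the software requirements table


def java_major_version(version_output: str):
    """Extract the major Java version from `java -version` output."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if not match:
        return None
    major = int(match.group(1))
    if major == 1 and match.group(2):
        # Legacy numbering: "1.8.0_292" means Java 8.
        return int(match.group(2))
    return major


def check_host(which=shutil.which, run=subprocess.run) -> list:
    """Return a list of problems found; an empty list means no problems."""
    problems = []
    # `ld` and `as` are provided by GNU binutils.
    for tool in ("ld", "as", "java"):
        if which(tool) is None:
            problems.append(f"{tool} not found on PATH")
    if which("java") is not None:
        # `java -version` writes its report to stderr.
        result = run(["java", "-version"], capture_output=True, text=True)
        major = java_major_version(result.stderr + result.stdout)
        if major != REQUIRED_JAVA_MAJOR:
            problems.append(
                f"Java {REQUIRED_JAVA_MAJOR} required, found {major}"
            )
    return problems
```

The `which` and `run` parameters exist only so that the lookups can be replaced in tests. Calling `check_host()` with no arguments inspects the local host.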

Optional Software

| Program | Description |
|---|---|
| vim | Editor for creating or changing files. |
| sudo | Enables users to run programs with alternate security privileges. |
| net-tools | Networking utilities. |
| psutils | Python system and process utilities for retrieving information on running processes and system usage. |
| tuned | Linux system service to apply tunables. |
| wget | Utility for downloading files over a network. |

Firewall Requirements

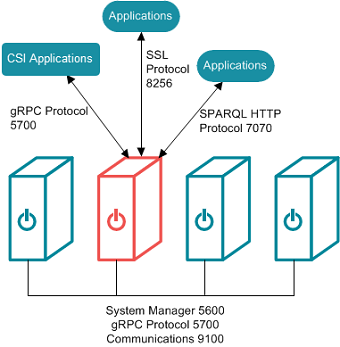

Graph Lakehouse servers communicate via TCP/IP sockets. Client applications connect to Graph Lakehouse using the standard SPARQL HTTP(S) protocol. Altair applications, such as the Graph Lakehouse front end, communicate with the database via a secure, encrypted gRPC-based protocol.

For Graph Lakehouse clusters, all servers in the cluster must be in the same network. Make sure that all instances are in the same VLAN, security group, or placement group.

Open the TCP ports listed in the table below. The image at the beginning of this section shows a visual representation of the communication ports.

| Port | Description | Required Access |
|---|---|---|
| 5700 | gRPC protocol port for secure communication between Graph Lakehouse servers. | The following list describes the access that is needed for port 5700: Make sure that the Linux environment variables http_proxy and https_proxy are not set on the servers. The Graph Lakehouse gRPC protocol cannot make connections to the database when proxies are enabled. |
| 8256 | SPARQL HTTPS port for SSL communication between applications and Graph Lakehouse. | The following list describes the access that is needed for port 8256: |
| 7070 | Optional SPARQL HTTP port for communication between applications and Graph Lakehouse. | The following list describes the access that is needed for port 7070 if you have applications that will access Graph Lakehouse over HTTP: |
| 9100 | The internal fabric communications port. | The following list describes the access that is needed for port 9100: |
| 5600 | The SSL system management port. | The following list describes the access that is needed for port 5600: |
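
The proxy restriction and the port list above can be checked from a client or server host. The following is a minimal sketch: the names `PORTS`, `proxy_variables_set`, and `port_is_open` are hypothetical, and the connection test only confirms that a TCP connection can be opened. It does not verify that Graph Lakehouse is the service answering on the port.

```python
import os
import socket

# Ports from the firewall requirements table.
PORTS = {
    5700: "gRPC protocol",
    8256: "SPARQL HTTPS",
    7070: "SPARQL HTTP (optional)",
    9100: "internal fabric communications",
    5600: "SSL system management",
}


def proxy_variables_set(environ=os.environ) -> list:
    """Return the proxy variables that are set and non-empty."""
    return [name for name in ("http_proxy", "https_proxy") if environ.get(name)]


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False
```

For example, `[p for p in PORTS if not port_is_open("gl-node1.example.com", p)]` would list the ports that cannot be reached on a host, where `gl-node1.example.com` is a placeholder host name.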

Virtual Environments and Cluster Configuration

When your data loading and performance requirements warrant a server cluster, create a cluster with at least four nodes. On a single node, data is redistributed in memory without using the network. If you add one or two nodes to create a two- or three-node cluster, data is distributed over the network to use the additional CPUs. However, the CPU gain from the one or two additional nodes does not outweigh the performance degradation that the network introduces.

Once a cluster is in use, you can provision additional servers at any time to add CPU and memory capacity, which boosts performance and increases the total amount of memory available for loading graph data. Graph Lakehouse requires that all elements of the infrastructure provide the same quality of service. Do not run Graph Lakehouse on the same server as any other application software.
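
The cluster guidance in this section, together with the earlier requirement that all nodes share the same hardware specification, can be expressed as a simple planning check. The following is a minimal sketch: `NodeSpec` and `validate_cluster_plan` are hypothetical names, and comparing only RAM and core counts is a simplification of "same hardware specification and configuration."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    ram_gb: int
    cpu_cores: int


def validate_cluster_plan(nodes: list) -> list:
    """Return a list of problems with a planned deployment."""
    if not nodes:
        return ["plan contains no nodes"]
    problems = []
    # A single server is fine; a cluster should have at least four nodes.
    if len(nodes) in (2, 3):
        problems.append(
            f"{len(nodes)}-node cluster: use a single server or at least four nodes"
        )
    # Frozen dataclasses are hashable, so a set exposes any differences.
    if len(set(nodes)) > 1:
        problems.append("nodes do not all share the same hardware specification")
    return problems
```

An empty result means that the plan passes both checks. For example, four identical `NodeSpec(ram_gb=256, cpu_cores=32)` entries produce no problems, while three of them produce one.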